Make Your AI Operations Visible.

Real-time monitoring of LLM/AI agent execution logs, costs, and performance.

Get started with just 3 lines of code.

Running AI Apps? Facing These Challenges?

Identify root causes and share insights easily.

API costs are rising, but you can't identify which feature is responsible

LLM errors in production go unnoticed for too long

Responses are slow, but you can't identify the bottleneck

You can't review what was actually sent to users after the fact

No way to share AI usage metrics with your team

AITracer Solves These!

Four key features for complete AI operations support

Complete Log Recording

- Record all inputs and outputs

- Auto-track tokens and costs

- Trace IDs for debugging

Cost Visualization

- Daily and per-model cost tracking

- Budget alerts before overspending

- Compare cost efficiency
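To make the cost features above concrete, here is a minimal Python sketch of per-day, per-model cost aggregation with a simple budget warning. It is an illustration only, not AITracer's API: the `LogEntry` record, the function names, the model names, and the `PRICE_PER_1K` table are all hypothetical, and the prices are placeholders rather than real provider rates.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date

# Placeholder prices in USD per 1,000 tokens (hypothetical, not real rates).
PRICE_PER_1K = {
    "model-a": {"input": 0.50, "output": 1.50},
    "model-b": {"input": 0.10, "output": 0.30},
}


@dataclass
class LogEntry:
    """One logged LLM call (hypothetical record shape)."""
    day: date
    model: str
    input_tokens: int
    output_tokens: int


def entry_cost(entry: LogEntry) -> float:
    """Cost of a single call, derived from its token counts."""
    price = PRICE_PER_1K[entry.model]
    return (entry.input_tokens * price["input"]
            + entry.output_tokens * price["output"]) / 1000


def cost_by_day_and_model(entries):
    """Sum costs into a {(day, model): usd} mapping."""
    totals = defaultdict(float)
    for e in entries:
        totals[(e.day, e.model)] += entry_cost(e)
    return dict(totals)


def budget_alert(entries, monthly_budget, warn_ratio=0.8):
    """Return True once total spend reaches warn_ratio of the budget."""
    spent = sum(entry_cost(e) for e in entries)
    return spent >= monthly_budget * warn_ratio
```

Warning at a fraction of the budget (80% in this sketch) rather than at 100% is what lets an alert arrive before overspending instead of after it.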

Performance Monitoring

- Real-time latency measurement

- P50/P99 percentile analysis

- Identify bottlenecks
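For readers unfamiliar with percentile metrics, the following Python sketch shows how P50 and P99 can be computed from a list of latencies using the nearest-rank method. The function name and sample data are illustrative assumptions; the source does not say which percentile method AITracer uses.

```python
import math


def percentile(values, p):
    """Nearest-rank percentile: the smallest sample such that at least
    p percent of all samples are less than or equal to it."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Hypothetical latencies in milliseconds, with one slow outlier.
latencies_ms = [120, 95, 110, 105, 2400, 130, 100, 115, 90, 125]

p50 = percentile(latencies_ms, 50)  # 110
p99 = percentile(latencies_ms, 99)  # 2400
```

The mean of this sample is 339 ms, which describes neither the typical request (about 110 ms) nor the slow one (2400 ms). Reporting P50 and P99 separately keeps both visible.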

Alert Notifications

- Instant error rate detection

- Slack, Email, Webhook support

- Flexible threshold customization
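As a rough picture of threshold-based error rate alerting, here is a minimal Python sketch that tracks the most recent call outcomes in a sliding window and signals when the error rate exceeds a configurable threshold. The class name, parameters, and defaults are hypothetical and do not describe AITracer's actual alert rules.

```python
from collections import deque


class ErrorRateAlert:
    """Fire when the error rate over the last `window` calls exceeds `threshold`."""

    def __init__(self, threshold=0.05, window=100, min_samples=20):
        self.threshold = threshold
        self.min_samples = min_samples
        self.results = deque(maxlen=window)  # True means the call failed

    def record(self, is_error: bool) -> bool:
        """Record one call outcome; return True if the alert should fire."""
        self.results.append(is_error)
        if len(self.results) < self.min_samples:
            return False  # too few samples to judge the rate
        rate = sum(self.results) / len(self.results)
        return rate > self.threshold
```

The `min_samples` guard is a design choice in this sketch: without it, a single failure on the first call would count as a 100% error rate and trigger a notification immediately.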

Get Started in Just 3 Lines

Integrate with minimal changes to your existing code

tracer = AITracer(api_key="at-xxxx")

client = tracer.wrap_openai(OpenAI())

# That's it! All API calls are now automatically logged

Intuitive Dashboard for Clear Insights

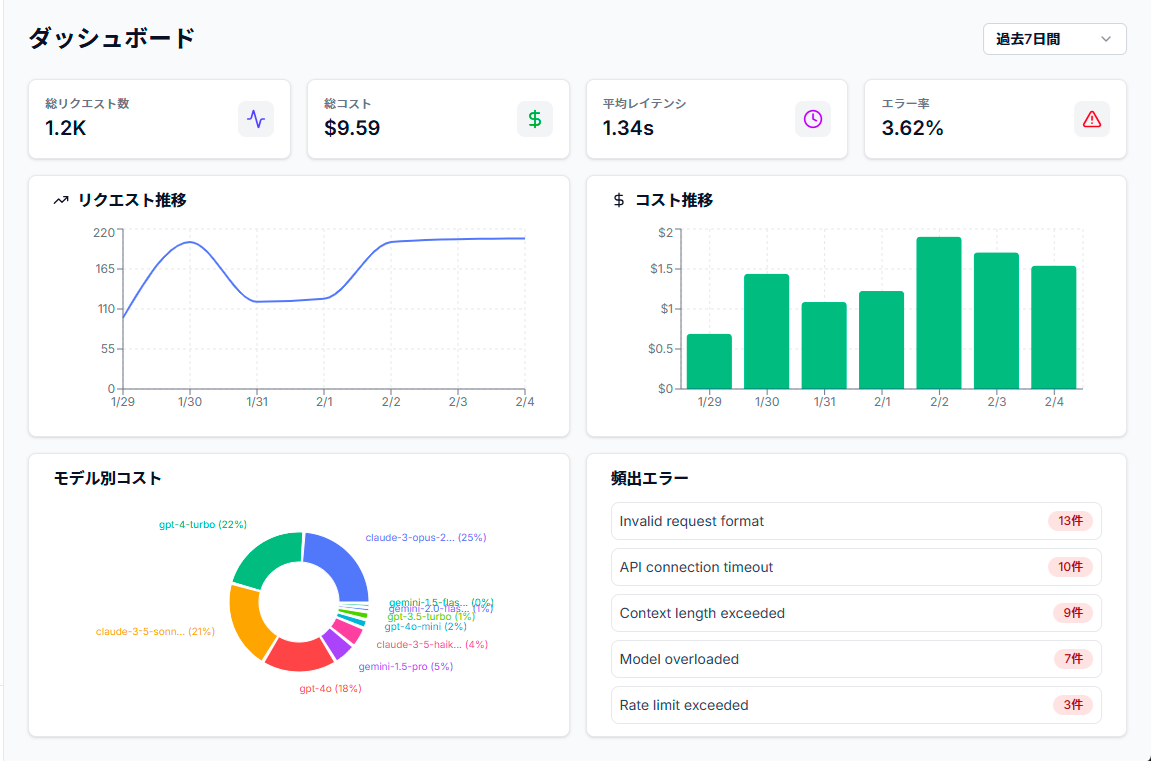

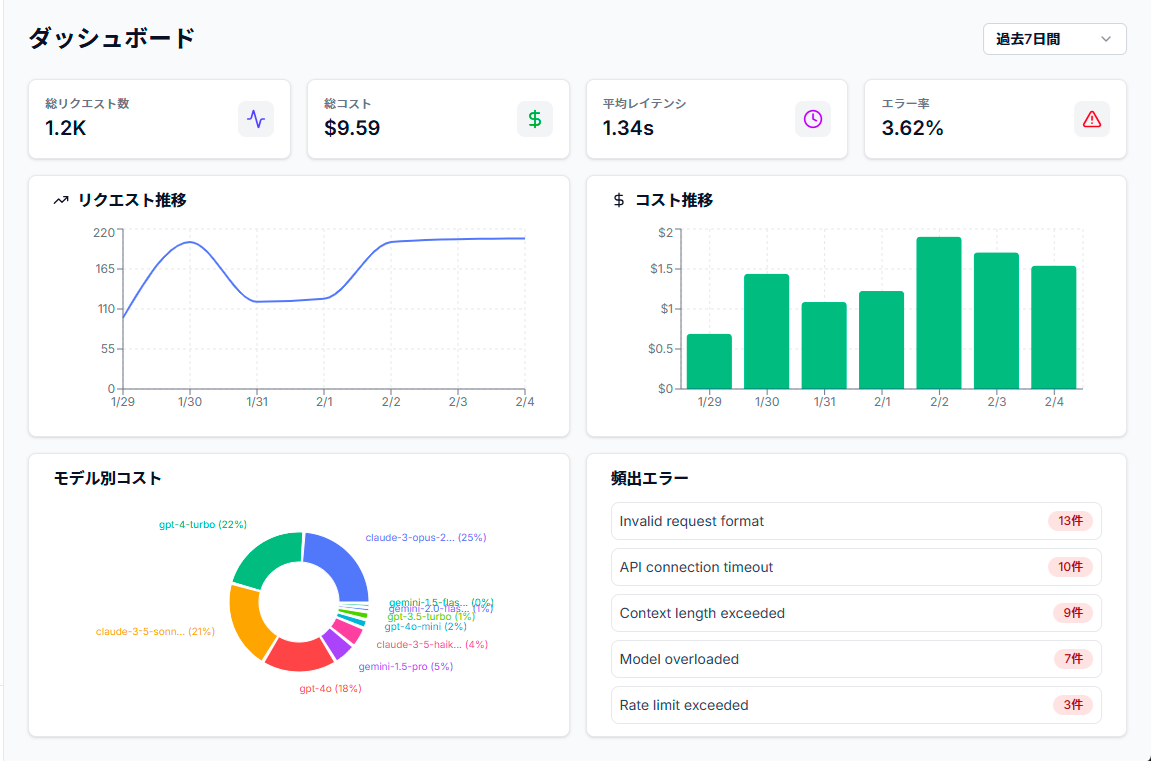

View request counts, costs, and latency in real-time

Simple Pricing

Plans that scale from startups to enterprise

Free: for personal dev & testing

- ✓ 1,000 logs/month

- ✓ 7-day data retention

- ✓ 1 user

- ✓ 3 alert rules

- ✓ Email notifications

For small teams

- ✓ 10,000 logs/month

- ✓ 30-day data retention

- ✓ 5 users

- ✓ 10 alert rules

- ✓ Webhook support

For growing teams

- ✓ 100,000 logs/month

- ✓ 90-day data retention

- ✓ Unlimited users

- ✓ 50 alert rules

- ✓ Slack integration

Enterprise: for large organizations

- ✓ Unlimited logs

- ✓ Custom data retention

- ✓ Unlimited users

- ✓ Unlimited alerts

- ✓ SSO support

- ✓ 99.95% SLA

Supports Major AI Providers

Monitor your LLMs as-is: OpenAI, Anthropic (Claude), and Google Gemini, plus LangChain

Coming Soon: Azure OpenAI / AWS Bedrock / Cohere

Secure by Design

Your data is protected with enterprise-grade security

Encryption

TLS 1.3

PII Detection

Auto-masking of personal data

Audit Logs

All operations recorded

Data Protection

GDPR compliant

Availability

99.9% SLA
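To give a sense of what the auto-masking of personal data listed above can involve, here is a deliberately simple Python sketch that replaces email addresses and phone-like digit sequences before a log is stored. The patterns and the `mask_pii` function are illustrative assumptions, not AITracer's detection logic, and production-grade PII detection covers far more cases than two regular expressions.

```python
import re

# Simplified patterns for illustration; real PII detection is much broader.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d -]{8,}\d")


def mask_pii(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


masked = mask_pii("Contact alice@example.com or 090-1234-5678.")
# masked == "Contact [EMAIL] or [PHONE]."
```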

Frequently Asked Questions

How much do I need to change my existing code?

Just wrap your OpenAI client; your existing code works as-is. Changes are typically just a few lines.
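Why can a wrapper leave existing code untouched? One common way to build such a wrapper is a transparent proxy that forwards every attribute access to the real client and records each call on the way through. The Python sketch below demonstrates that general pattern with a stand-in client. `LoggingProxy` and the `Fake*` classes are hypothetical names for illustration; this is not AITracer's implementation and uses no real OpenAI calls.

```python
import time


class LoggingProxy:
    """Forward attribute access to `target`, logging every call made through it."""

    def __init__(self, target, sink, path=""):
        self._target = target
        self._sink = sink  # list that receives one dict per call
        self._path = path

    def __getattr__(self, name):
        attr = getattr(self._target, name)
        path = f"{self._path}.{name}" if self._path else name
        if not callable(attr):
            # Nested namespaces such as client.chat.completions get wrapped too.
            return LoggingProxy(attr, self._sink, path)

        def logged_call(*args, **kwargs):
            record = {"call": path, "kwargs": kwargs, "output": None, "error": None}
            start = time.perf_counter()
            try:
                record["output"] = attr(*args, **kwargs)
                return record["output"]
            except Exception as exc:
                record["error"] = repr(exc)
                raise
            finally:
                record["latency_ms"] = (time.perf_counter() - start) * 1000
                self._sink.append(record)

        return logged_call


# Stand-in client that mimics the client.chat.completions.create(...) call shape.
class FakeCompletions:
    def create(self, model, messages):
        return {"model": model, "content": "hello"}


class FakeChat:
    completions = FakeCompletions()


class FakeClient:
    chat = FakeChat()


logs = []
client = LoggingProxy(FakeClient(), logs)
reply = client.chat.completions.create(
    model="model-a", messages=[{"role": "user", "content": "hi"}]
)
```

The calling code is identical to what it would be against the unwrapped client, yet `logs` now holds the inputs, output, latency, and any error for the call.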

What's the impact on my app's performance?

Logs are processed asynchronously, so the overhead is less than 3 ms. It is safe to use in production environments.
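Asynchronous processing keeps overhead low because the request path only hands the log record to a queue, while a background worker performs the slow upload. The Python sketch below shows that general pattern using only the standard library. `BackgroundLogger` and its `send` callback are hypothetical and not AITracer's internals, and the sketch does not measure or reproduce the 3 ms figure.

```python
import queue
import threading


class BackgroundLogger:
    """Queue log records on the caller's thread; upload them on a worker thread."""

    def __init__(self, send):
        self._send = send  # slow function, for example an HTTP upload
        self._queue = queue.Queue()
        self._worker = threading.Thread(target=self._run, daemon=True)
        self._worker.start()

    def log(self, record):
        """Called on the request path: only enqueues, never waits on I/O."""
        self._queue.put(record)

    def _run(self):
        while True:
            record = self._queue.get()
            try:
                self._send(record)
            except Exception:
                pass  # a failed upload must not crash the worker
            finally:
                self._queue.task_done()

    def flush(self):
        """Block until every queued record has been handed to `send`."""
        self._queue.join()


sent = []
logger = BackgroundLogger(sent.append)
for i in range(3):
    logger.log({"id": i})
logger.flush()
```

Calling `flush()` before process exit matters in this design: the worker is a daemon thread, so records still in the queue would otherwise be lost at shutdown.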

Do you offer on-premise deployment?

Yes, available with the Enterprise plan. Please contact us for details.

What are the Free plan limitations?

The Free plan includes 1,000 logs per month, 7-day data retention, and 1 user.

Which LLM providers are supported?

We support OpenAI, Anthropic (Claude), and Google Gemini. LangChain is also supported.

Where is my data stored?

Data is stored in AWS Tokyo region (ap-northeast-1). Enterprise plans can choose custom regions.